There exists no formal definition of “the metaverse,” and no one individual or organization controls it. The metaverse is bigger than a single application, and it doesn’t look, feel, play, or sound like any one thing. It’s best to define the metaverse as a collection of organizations, tools, applications, and hardware, all of which serve a similar purpose.

At the end of this post, I’ll introduce my formal definition of the word “metaverse.” For now: The metaverse is the current generation of the Internet. We’re already there, and we’ve been there. We’ve been working, playing, and connecting within the metaverse for many years.

This post serves as the starting point for a discussion about the metaverse as it exists in December 2022. The opinions that I express in this piece are my own, and are not a reflection of the opinions of my current, past, or future employers.

In this document:

- I’ll first explore the concept of identity as it relates to the metaverse.

- After that, I’ll enumerate several dozen notable metaverse-related organizations, software, and hardware.

- Next, I’ll introduce and discuss some high-level concepts regarding the metaverse.

- I’ll reveal more of who I am and how I’ve contributed to the metaverse.

- Finally, I’ll talk more about the formal definition of “metaverse.”

Let’s get started.


People regularly have different definitions of words – your definition of “metaverse” may be different from mine.

Identity, Self-Expression, and Avatars in the Metaverse

We split our personal identities across dozens of common metaverse platforms such as Discord, Fortnite, and Google. We rarely stop to think about what that means and how it relates to our identities outside the metaverse.

Here are some parts of my personal identity:

- I am hundreds of individual user accounts across thousands of websites.

- I am a website.

- I am text within a Discord window, Slack message, and blog post on A Friendly Fox.

- I am source code in a text editor.

- I am a 640x360-pixel rectangle within a Zoom window.

- I am ghosts within the Wayback Machine.

- I am an avatar in a virtual world:

- In one app, I look like my physical, Earthly form, because that form stood in a 3D scanner and produced a photorealistic computer model.

- In that same app, at a different moment in time, I look like Klonoa, the main character from one of my favorite childhood games.

- In another app, I am a static black-and-white photo inside a circular frame.

- In a different app, I am Ana Amari, an Egyptian bounty hunter offering critical support to her team.

The ways that we choose to express ourselves online offer important insights into our identity.

The inverse is also true: When we thoughtfully consider who we are, the ways we choose to express ourselves online change.

In an increasingly-online world, how can platform developers give people the opportunities to be seen the ways they want to be seen? The current fad is to give people realistic 3D humanoid avatars and have them run around a virtual world. While this is psychologically and visually attractive, it’s important to understand the limitations of that expression of identity, and when it’s appropriate to represent users in that way.

Often, I can’t accurately represent who I am online due to limitations of a given platform. For example, I feel very uncomfortable with my webcam turned on in Zoom meetings unless I am actively speaking. I feel uncomfortable with video because my eyes and face and hands are often busy with other tasks while I’m listening to a speaker – I might be taking notes or doing external research – and I don’t want anyone else on my video call to be questioning or distracted by what works best for me. I’d much rather be represented visually some other way most of the time.

Here are some questions for you to ponder after you’ve finished reading this post:

- How do you currently represent yourself in the metaverse?

- How do you want to represent yourself in the metaverse?

- What are some of the differences between the ways you represent yourself inside and outside the metaverse?

Notable Metaverse Organizations

Companies detailed here are notable for their presence in the metaverse beyond a single specific piece of hardware or software. Each organization has its own header, and I’ve listed significant contributions made by that organization underneath that header.

I’ve written just a few sentences about each significant contribution in this document, but I could write entire posts about each of them.

Epic Games

Epic is a $32 billion private company headquartered in North Carolina and owned in large part by China’s Tencent. Epic has an astronomical impact on the metaverse. Tim Sweeney, Epic’s founder and majority shareholder, knows this to be true. He also knows how important it is to have some control over the metaverse, and has talked at length about Epic’s metaverse strategy.

On July 30, 2019, I attended a SIGGRAPH talk by Tim Sweeney titled “THRIVE: A Special Talk With Epic Games’ Tim Sweeney: Foundational Principles & Technologies for the Metaverse.” Tim’s talk was fantastic, and he shared dozens of nuggets of wisdom that are still very applicable today.

You can download my detailed notes from this talk here:

I disagree with Tim’s 2019 definition of the Metaverse, which I believe to be too limiting. I wonder how his definition has changed since 2019.

I love this nugget and how it relates to High Fidelity and Croquet, two companies with whom I’ve worked:

Need standards for a network architecture. Currently, client/server architecture is king. Client is a single machine representing an owner, server is a source of truth. We _want_ one huge, shared world that everyone can participate in seamlessly. Clearly requires more than one server - so we need standards for client/server and server/server comms. One possible model is a federated model, like email. Another model is decentralized: Anyone can add their hardware power to add to the game world.

I highly encourage you to read my full notes.

Fortnite

There is no other application in which someone can control Goku in a lightsaber duel against Rick Sanchez in an attempt to win $1 million from MrBeast.

- Goku is from Dragon Ball Z

- Lightsabers are from Star Wars

- Rick Sanchez is from Rick and Morty

- MrBeast is… MrBeast

Fortnite uses the latest rendering tech offered by Unreal Engine 5.1 and is playable on PC, macOS, PS4, PS5, Xbox One, Xbox One S, Xbox One X, Nintendo Switch, Android, and Nvidia GeForce NOW (but not natively on iOS, because Epic is constantly battling Apple).

Fortnite launched in September 2017 and still earns Epic Games millions of dollars per day in revenue.

Epic is winning the metaverse, and Fortnite is the reason why.

Unreal Engine 5.1

UE is one of the few dominant app engines used to create immersive experiences.

Recently, Unreal has also been used in virtual film production. Computers are now powerful enough to render photorealistic environments in real time and display them on massive, high-resolution screens behind live actors on a movie set. Check it out:

Unreal Engine is a content creation tool. The more people who use it – including people from outside “traditional metaverse industries,” and including those who learn how to use it because they played Fortnite – the more power Epic has to control the metaverse.

Verse

Some recent Epic news: Epic Games Launches New Web3 Programming Language, Verse

It’s unclear to me what kind of real impact Verse will have on the software industry. Also: what the hell is a “Web3 programming language”? Regardless, Epic putting their muscle behind a new language “for the metaverse” is meaningful.

Google Account

Ubiquitous, your Google account gives you access to world-class email, photo storage, document editors, and more. It also acts as a sign-in method for thousands of websites and apps.

Google Chrome (and Chromebook hardware)

The dominant Web browser (and some less-dominant, inexpensive hardware for browsing the Web).

YouTube & YouTube Gaming

The essential video hosting and video streaming website.

Google paid Ludwig, a popular Twitch streamer, millions of dollars to switch to YouTube Gaming in November 2021.

Stadia (RIP)

Stadia itself was not a bad idea. Cloud devices will always be far faster than local devices, and Stadia was an experiment in how to use those cloud devices in a “new” way. (I put “new” in quotes because OnLive was doing what Stadia did in 2009.)

For rendering an entire scene and streaming that scene to users, using cloud devices is not a good idea, because we haven’t figured out how to circumvent physics (latency). Low latency is critical for many types of games. However, rendering individual components of a game in the cloud – mixed 3D audio or lightmaps, for example – and then streaming those components to clients will be a big deal.
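To put rough numbers on the latency problem, here is a back-of-the-envelope sketch. It assumes light travels through optical fiber at about 200,000 km/s (roughly two thirds of its speed in a vacuum), and the distances are hypothetical. Real connections add routing, encoding, and display delays on top of this, so treat the results as a best case.

```python
# Assumption: light in optical fiber travels at roughly two thirds of its
# vacuum speed, or about 200,000 km/s.
SPEED_IN_FIBER_KM_PER_S = 200_000


def round_trip_ms(distance_km):
    """Best-case round trip over fiber, ignoring routing and processing."""
    return 2 * distance_km * 1000 / SPEED_IN_FIBER_KM_PER_S


frame_budget_ms = 1000 / 60  # time available per frame at 60 frames per second

for distance_km in (100, 1000, 4000):  # hypothetical distances to a data center
    rtt = round_trip_ms(distance_km)
    share = rtt / frame_budget_ms
    print(f"{distance_km} km: {rtt:.1f} ms round trip, {share:.0%} of one frame")
```

Under these assumptions, a data center 1,000 km away costs 10 ms per round trip before any rendering happens, which is more than half of a single 60 fps frame.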

The Amazons, Googles, and Microsofts of the world will always be able to afford far, far more powerful hardware than you or me at home. They’ll always be able to, either directly or indirectly, sell that compute (3D rendering or otherwise) to clients.

Mozilla

Mozilla Firefox

The Web browser made by a company which pledges to build a healthy Internet.

Mozilla Hubs

A whole new world, from the comfort of your home. Take control of your online communities with a fully open source virtual world platform that you can make your own.

A better way to connect online: No more videos in a grid of squares. Gather with your community online as avatars in a virtual space and communicate more naturally — no headset required.

https://hubs.mozilla.com

Meta

Meta Horizon Worlds

Horizon Worlds isn’t working out very well for Meta:

https://9to5mac.com/2022/10/07/horizon-worlds/

That’s OK for them. Meta has other metaverse apps, like Instagram and WhatsApp and Facebook.

Meta Quest 2

Currently, the best VR headset for most people is the Quest 2, the cost of which Meta is heavily subsidizing; Meta’s Reality Labs has lost $9 billion as of October 2022.

Meta Quest Pro

The Quest Pro is Meta’s newest mixed reality headset, having launched on October 25, 2022 at $1,500. Its processor is fast enough and each of its cameras is now high-resolution enough to support full color passthrough, allowing best-in-class interaction with and augmentation of real-world objects.

Apple

iPhone & iPad

ARKit is the best way we currently have to view augmented reality, and ARKit only runs on iOS devices.

AirPods

AirPods in Transparency mode is the best way we currently have to hear augmented reality.

Safari/WebKit

The third major browser, alongside Chrome and Firefox.

Unreleased Apple AR Glasses

These glasses, when released, will become the best way to view and hear augmented reality.

Microsoft

Minecraft

In September 2014, Microsoft bought Mojang (Minecraft’s developers) for $2.5 billion. I believe that this was a phenomenal deal for Microsoft.

Minecraft isn’t just a game; it’s also an education platform. The kids who play Minecraft today are learning computer science concepts with its redstone blocks.

People are buying “Minecraft Realms,” paying Microsoft real money (not crypto) monthly to have their own space in the metaverse.

Minecraft has hundreds of thousands of daily active users over ten years after its original release date, and it’s available on several computing platforms, including mobile and native VR.

AltspaceVR

I haven’t heard much about AltspaceVR recently, but it’s still around. It’s like Hubs, but Microsoft.

HoloLens

The dominant AR glasses for enterprise. HoloLens 2 quietly began shipping in September 2019.

Microsoft is supplying HoloLens technology to the US military.

Edge

Now built on Chromium, Edge is Microsoft’s cross-platform Web browser.

Xbox

Microsoft’s video game hardware platform has been around since November 2001 and has kept growing, remaining competitive against Nintendo’s Switch and Sony’s PlayStation platforms.

All the Game Developers Under Microsoft

https://www.pcgamer.com/every-game-and-studio-microsoft-now-owns

Microsoft employs thousands of PMs, artists, programmers, QA engineers, office managers, and other workers who are all making video games. They’re all building content for the metaverse.

Snap

One of the most popular AR applications is Snapchat.

Snap’s Lens Studio is some of the best developer tooling for building AR experiences.

Amazon

Twitch

Twitch Chat is a metaverse in and of itself. It has its own language. (PogChamp)

Have you ever experienced Twitch Chat on a stream watched by thousands? If not, you haven’t yet lived.

O3DE

The Open 3D Engine, born from Amazon Lumberyard, is an open-source 3D game engine.

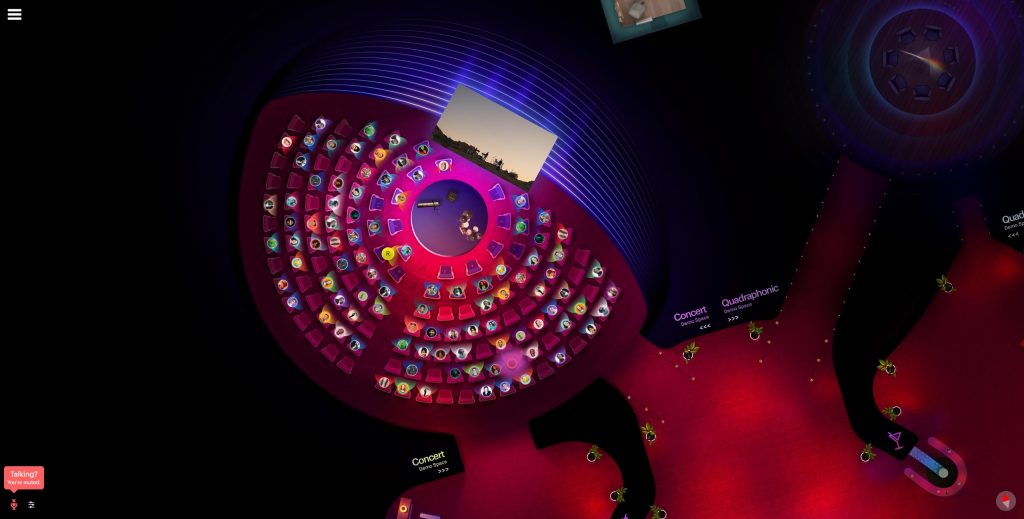

Croquet

In traditional multi-user applications, the server owns the application state. In contrast, Croquet OS employs a replicated computation model, where each client simulates a bit-identical, shared truth.

This means that everyone using an app built on top of Croquet OS sees the exact same thing as everyone else. Things as complicated as fluid dynamic simulations are exactly the same, across all clients, always.

Croquet’s technology enables a broad array of low-latency, multi-user applications far beyond gaming. Developers can write their code as if it were for a single user, and their app becomes multi-user, automatically.
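Here is a minimal sketch of the replicated-computation idea in Python. This is not Croquet’s API. The `Replica` class, the event names, and the seed are all hypothetical, chosen only to show that two clients which start from the same state and apply the same ordered events end up with identical results, without a server owning the state.

```python
import random


class Replica:
    """One client's local copy of a shared simulation (hypothetical model)."""

    def __init__(self, seed):
        # Every replica seeds its generator identically, so "random"
        # choices come out the same on every client.
        self.rng = random.Random(seed)
        self.positions = []

    def apply(self, event):
        kind, value = event
        if kind == "spawn":
            # Add `value` bots at pseudo-random integer positions.
            for _ in range(value):
                self.positions.append(self.rng.randrange(0, 1000))
        elif kind == "tick":
            # Move every bot by a pseudo-random integer step.
            self.positions = [
                (p + self.rng.randrange(-5, 6)) % 1000 for p in self.positions
            ]


def run(seed, events):
    """Replay an ordered event list on a fresh replica and return its state."""
    replica = Replica(seed)
    for event in events:
        replica.apply(event)
    return replica.positions


# Both clients receive the same ordered events; neither sends bot positions.
events = [("spawn", 500)] + [("tick", None)] * 100
client_a = run(seed=42, events=events)
client_b = run(seed=42, events=events)
print(client_a == client_b)  # True
```

The sketch uses integer math on purpose: bit-identical results depend on every client computing exactly the same values, and floating-point behavior is one place where that can go wrong across machines.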

Croquet OS

Here’s an amazing demo of this technology: Click this link on two different machines. Notice that you see exactly the same thing on all of your systems, even as you modify the environment with the tools (they are in the top left).

After adding 500 moving bots to the simulation, the bandwidth cost of that simulation is about 7.5 kilobytes per second. Remember – the properties of those bots, like their position and behavior, are synchronized perfectly across every single client with zero judder. This completely blows my mind.
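For a sense of scale, here is the arithmetic behind that figure. The 500 bots and 7.5 kilobytes per second come from the demo. The comparison case is my own assumption: a hypothetical server that streams three 4-byte floats per bot, 20 times per second, with 1 kilobyte counted as 1,000 bytes.

```python
# Figures observed in the demo.
bots = 500
bandwidth_bytes_per_s = 7.5 * 1000  # assumes 1 kilobyte = 1,000 bytes

per_bot = bandwidth_bytes_per_s / bots
print(per_bot)  # 15.0 bytes per bot per second

# Hypothetical comparison: a server that streams every bot's position.
bytes_per_update = 12  # assumed: three 4-byte floats for x, y, z
updates_per_s = 20     # assumed update rate
naive_bytes_per_s = bots * bytes_per_update * updates_per_s

print(naive_bytes_per_s / bandwidth_bytes_per_s)  # 16.0 times more bandwidth
```

Fifteen bytes per bot per second is barely more than one position update per second under those assumptions, which suggests that the clients are computing bot state locally rather than receiving it.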

Croquet World Builder

The Microverse World Builder is a development platform for the Open Metaverse that extends the next generation of Web and Mobile. It is built on the existing infrastructure of the web, which web developers are already familiar with.

https://croquet.io/microverse-builder/

World Builder is built on top of Croquet OS, and shows off many of COS’ core features.

Croquet Web Showcase

Web Showcase is a low-code solution for integrating a metaverse world into existing 2D websites. It is a layer on top of World Builder and is another tool for introducing developers into Croquet OS.

Qualcomm

Qualcomm’s Snapdragon hardware powers a huge number of mobile devices, including XR devices. The limitations of Qualcomm’s hardware define the limitations of metaverse software.

Valve

Steam

Steam is a digital distribution service and storefront. Valve mostly sells games and DLC on Steam, but people can also buy apps and utilities there.

As of writing this text, there are 28 million people logged in to Steam. Steam is the entry point to the metaverse for a huge number of people.

Valve takes a 30% cut of all purchases from Steam.

Valve Index

Valve’s June 2019 VR headset with finger-tracking controllers is among the best ways to experience tethered PCVR.

HTC Vive 1

The HTC Vive and the Oculus Rift were the first two major headsets to hit the consumer PCVR market in early 2016.

HTC and Valve collaborated on the Vive.

Adobe

Adobe Creative Suite

CS is still the dominant creative suite of apps used to author creative content, including photos, illustrations, videos, and layout.

Adobe Substance 3D

A part of Adobe’s app portfolio, Substance 3D is a suite of apps used to author and modify 3D content. Its ease of use makes it very popular.

Sony

PlayStation VR

Sony’s foot in the virtual reality industry, PlayStation VR is a virtual reality headset for the PS4 and PS5.

PSVR2 will release in February 2023.

Nvidia

Nvidia makes the most powerful graphics processing units today. Their GPUs dominate the Steam Hardware Survey, beating AMD’s GPU market share 75% to 15%.

Nvidia’s CEO Jensen Huang has strong opinions about the metaverse:

https://blogs.nvidia.com/blog/2021/08/10/what-is-the-metaverse/

Niantic

Niantic are the creators of the massively popular AR app Pokémon GO.

Notable Metaverse Standards Organizations

Khronos Group

Khronos is responsible for developing royalty-free, open standards for 3D graphics, virtual and augmented reality, parallel computing, machine learning, and vision processing.

Some of those standards include OpenGL, OpenGL ES, OpenXR, WebGL, Vulkan, OpenCL and glTF.

The top “promoter members” of Khronos are AMD, Apple, Arm, Epic, Google, Huawei, IKEA, Imagination, Intel, Nvidia, Qualcomm, Samsung, Sony, Valve, and VeriSilicon.

World Wide Web Consortium (W3C)

The W3C creates standards for the Web in domains such as visual design, Web app design, web architecture, accessibility, internationalization, security, and privacy. This makes the W3C a very powerful consortium.

Some of these standards include XMLHttpRequest, the Geolocation API, WebAudio, the PNG image format, WebRTC, HTTP, XML, and CSS.

Tim Berners-Lee, the inventor of the World Wide Web, leads the W3C. Anyone can join. Some of its 465 member companies are Amazon, Google, Microsoft, Shopify, Disney, Wix, Yahoo, Zoom, AT&T, and Verizon.

It is up to browser developers to implement the W3C’s standards themselves. I have opinions about this; see “Web Standards.”

Metaverse Standards Forum

The MSF is “where leading standards organizations and companies cooperate to foster interoperability standards for an open metaverse.”

One example of the kind of projects the Forum will host is to “exercise the 3D asset workflow from authoring to runtime rendering in multiple engines.” From the MSF website, it doesn’t look like such an exercise has happened yet. I’d love to see the results.

Founding members of the MSF include Khronos, Adobe, Epic, Meta, Nvidia, Qualcomm, Unity, Wayfair, and the W3C.

Open Metaverse Interoperability Group

The Open Metaverse Interoperability Group is focused on bridging virtual worlds by designing and promoting protocols for identity, social graphs, inventory, and more. Our members include businesses and individuals working towards this common goal. Aside from technical work, OMI aims to create a community of artists, creators, developers, and other innovators to discuss and explore concepts surrounding the design and development of virtual worlds.

https://omigroup.org/

Notable Metaverse Creation Tools

If I’ve listed a tool above under Notable Metaverse Organizations, it won’t appear in this list.

Unity

One of the most popular game engines.

Blender

A set of free and open-source 3D computer graphics software tools. Massively popular.

Autodesk 3ds Max

Like Blender, but not free.

Ready Player Me

A tool used to create 3D avatars for use in virtual worlds.

Notable Metaverse Applications

The lines between “metaverse creation tools” and “metaverse applications” are blurry, since many of the most powerful apps include tools which allow users to generate and modify content.

If I’ve listed an application above under Notable Metaverse Organizations, it won’t appear in this list.

Discord

Discord isn’t just a text chat platform – it’s a way to build and scale communities ranging from one user to nearly one million users. People can share text, videos, memes, images, voice clips, emoji, livestreams, “activities,” and so much more via this platform that is no longer just for gamers. Recently, Discord added a way for creators to monetize their servers.

Modern metaverse application developers need to figure out how to integrate Discord into their development and marketing strategy.

In April 2021, Discord rejected Microsoft’s $12 billion purchase bid. Discord knows what they have, which is a critical piece of metaverse technology worth far more than that amount.

Slack

With 18 million daily active users (source, but accuracy unknown), Slack is a critical way for hundreds of thousands of organizations to connect and build. It’s common for other metaverse companies to use Slack to communicate instead of using the applications they’re building. Why do you think that is?

Zoom

Zoom saw a meteoric rise during the pandemic as the video chatting platform.

Roblox

Roblox is teaching millions of children how to make games. The kids who grow up on tools like Roblox and Minecraft are going to express themselves through tools like these throughout their teenage and adult years.

VRChat

VRChat’s avatars are, by far, the best in the metaverse industry. They’re extremely customizable with unique physics and scripted behaviors. Players commission artists to build highly detailed avatars.

VRChat avatars and environments are modeled in any software which exports the .FBX format, and primarily finished in Unity.

Second Life

Second Life launched in June of 2003, which is pretty incredible. Second Life’s worlds and economy are still going strong.

High Fidelity

“Open-source, shared social VR” was HiFi’s first slogan, and it delivered on that promise – at first.

WordPress

WordPress is a platform used by 43% of all websites to easily disseminate information and host content, mostly in 2D.

Notable Metaverse Hardware

If I’ve listed a piece of hardware above under Notable Metaverse Organizations, it won’t appear in this list.

Magic Leap

Magic Leap raised $3.5 billion based on a promise that they could deliver a blue whale. In 2018, they launched funny-looking AR goggles with a tiny FOV and no specific target market, expecting developers to create an app ecosystem on their own. There was no blue whale.

In September 2022, Magic Leap launched their Magic Leap 2 headset, and it’s competing with Microsoft’s HoloLens in the enterprise space.

AI-Generated Text and Visual Art

Tools like DALL-E, Midjourney, Stable Diffusion, and GPT-3 have unique places in the metaverse. They are already used to create high-quality content cheaply and quickly. Today, these applications create 2D content. Soon, AI apps will be building 3D content for use in digital media, including movies, games, and social apps.

As users of these tools, we need to be mindful of the artists whose work was taken to train the AI datasets. It’s not enough to pay software engineers to run these tools; we must also support the humans who create original visual art (and who can actually draw hands, unlike the AIs).

AI-generated content still has significant limitations, especially when observed closely. These tools will be used for a while as a starting point for artists and developers, not as a final product.

Content Interoperability

One commonly shared goal of metaverse technologies is to ensure content is portable between platforms and applications. Standards-based metaverse development is highly important; we cannot allow a single entity to be in charge of the way developers create content like 3D models or scripted behaviors.

One example of a standard that seems to be propagating well throughout the metaverse is the extensible 3D asset file format, glTF. Content authoring tools such as Blender can export models in glTF. WebGL engines such as Three.js and A-Frame can read glTF files. Game engines such as Godot natively support glTF models. You can read more about the Khronos-created format on Wikipedia here.
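Part of what makes glTF portable is that a `.gltf` file is plain JSON. Here is a minimal sketch of a hand-written glTF 2.0 document, built with Python’s standard `json` module. The node name and the `generator` string are made up for illustration, and a real asset would also carry meshes, buffers, and materials.

```python
import json

# A tiny glTF 2.0 document: one scene containing one empty node.
# "asset.version" is the field every glTF file must declare.
gltf = {
    "asset": {"version": "2.0", "generator": "hand-written example"},
    "scene": 0,                        # index of the default scene
    "scenes": [{"nodes": [0]}],        # scene 0 contains node 0
    "nodes": [{"name": "EmptyNode"}],  # hypothetical node with no mesh
}

# Serialize to the text that would be saved as a .gltf file.
text = json.dumps(gltf, indent=2)
print(text)

# Any tool that can parse JSON can read the structure back.
loaded = json.loads(text)
print(loaded["asset"]["version"])  # 2.0
```

Because the format is an open, documented JSON structure rather than a proprietary binary blob, an authoring tool and a runtime engine from different vendors can agree on what the file means.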

Web Standards

Another important area of content interoperability in the metaverse pertains to browser standards. Web browsers are now ubiquitous, and they are installed on billions of devices. Whoever controls the browser with the most users has an outsized impact on the way Web standards are interpreted and implemented.

The word “interpreted” is key here: groups like the W3C don’t write the code implementing Web standards; they just write the standards themselves. It’s up to the developers who work for companies like Google to implement the W3C’s standards to the letter. Sometimes, human beings misinterpret language, and two developers might interpret (and thus implement) the standard differently. Other times, browser engine developers may decide to simply not support certain Web standards.

Remember when Chrome was new, and websites that worked in Chrome simply didn’t load in Internet Explorer? This happened in part because Google chose to implement newer and experimental standards into their then-new Chrome browser. After that, Google promoted Chrome hard to its massive Google Search audience – and it worked. Millions of people installed Chrome, thousands of websites were developed specifically for Chrome, and browsers like IE suffered. We’re still seeing the impact of that history today.

On January 15, 2020, Microsoft finally gave up maintaining its own browser engine and switched Edge’s internal engine to Chromium, the same engine which powers Google Chrome. This means that the Chromium browser engine is by far the dominant browser engine, and webapps which don’t work with Chromium lose huge market share.

Browsers like Safari and Firefox are left at a huge disadvantage if the developers controlling them don’t interpret or implement Web standards in the same way that Chrome does. Users of Safari and Firefox are often left frustrated because the websites they use don’t work properly. Developers of Web applications lament the time spent debugging issues that only occur on one browsing platform.

User-Generated Content and Licensing Terms

All companies dealing in metaverse technologies have a responsibility to ensure that their tech is used “appropriately,” for some definition of “appropriate.” As it becomes easier for users to serve content from hosted platforms, or fork and deploy open-source code, organizations need to be aware of how their platforms and code are used.

Web3

Similar to “the metaverse,” there’s no singular definition of “Web3,” but the two terms are inextricably linked. Discussions about Web3 usually involve “decentralization” and “blockchain.” “Web3 technologies” generally include one or more of:

- Cryptocurrencies like Bitcoin, Dogecoin, or Ethereum

- Decentralized autonomous organizations (DAOs)

- Non-fungible tokens (NFTs)

- Smart contracts

The idea of a decentralized Internet is extremely compelling. Content creators are more aware than ever of the power and control that centralized companies like Meta, Google, Amazon, and ByteDance have over their data. When my photography account was mistakenly banned from Instagram, I felt a fraction of the fear that creators whose livelihoods are based on social media feel when their accounts get banned.

However, there are big, unsolved problems with concepts lumped in with “Web3,” including:

- Most users don’t want to take care of their private keys. This yields centralized services which take care of keys on behalf of the users.

- Related: Most users don’t understand the consequences of losing their private keys. Users expect companies to be able to help them when they forget their passwords, and that concept doesn’t translate to users being their own key custodians.

- Most people don’t want to learn technical concepts to share or view content meaningful to them.

- All platforms beyond a certain size which allow for user-generated content will face challenges with content moderation.

- A significant percentage of “Web3” projects are scams that involve stealing money or content from unsuspecting users and artists.

Recommended Reading

Molly White, a software engineer, Wikipedia editor, and Web researcher, writes extensively about Web3 and its related technologies. She’s the author of the site “Web3 is Going Just Great” (https://web3isgoinggreat.com/), a site which tracks “some examples of how things in the blockchains/crypto/web3 technology space aren’t actually going as well as its proponents might like you to believe.”

Molly also writes essays about “the blockchain,” a collection of which you can find here: https://blog.mollywhite.net/blockchain/.

She is my favorite resource for explaining Web3 and blockchain (for some definition of those words) in understandable terms, and I highly recommend you check out her work. This post/video is a great place to start.

Miscellaneous Concepts

Any one of these list items could become its own post.

- Software development at any scale is hard. Building products is hard. Human communication is hard.

- One team could work for months on “small” metaverse projects, such as a collaborative whiteboard.

- Rushing small projects jeopardizes large projects.

- Users will not forgive you if a new experience isn’t perfect unless they already understand your value.

- This also applies to the developer experience.

- Understanding, celebrating, and publicly embracing neurodiversity yields massive, priceless benefits.

- Diversity, equity, and inclusion are not buzzwords – they are concepts with real impact. How is your metaverse organization approaching DE&I?

- Most people have communication styles which differ in key ways across contexts. For example, at work, some people prefer synchronous meetings, while others get significantly more value from text communication.

Be aware of the communication styles that work best for you, for each of your team members, and outside your organization.

- For me, in the “work | not urgent” context, I vastly prefer real-time text chat to most other forms of communication, because I’m able to more carefully consider ideas before responding to or committing them, and because there’s less pressure for me to be “on” in a certain way.

Who is Zach Fox?

Hello. ☺️ My parents named me Zach. When I was 12 years old, I named my online identity Valefox, which is a name I still use frequently today. I am a nonbinary person, leaning masculine. My pronouns are he/him. I am always exploring my identity.

I was born in 1992, the same year that Neal Stephenson published Snow Crash and coined the term “metaverse.” One of my first spoken phrases was “floppy disk,” and I’ve been exposed to the Internet since before I can remember.

My father started working with computers in the early 1980s while he was in the Air Force. Later, he became an Instructor at Global Knowledge, helping people understand the fundamentals of network security using Cisco hardware.

My mother stayed home to take care of my sister and me through our teenage years, returning to work as an accounting tutor in the late 2000s. Today, she works daily with students at Endicott College in Massachusetts.

I mention my parents here because they both instilled in me the importance of educating others in a patient, kind, understanding way, one where the teacher finds ways to meet the student. The technologies underlying the metaverse are not simple. The promises of these technologies can be and are abused for capitalistic gain at the expense of users’ mental health, physical health, and financial health.

I want to be part of a movement which uses modern technology to spread knowledge, enable deeper self-expression, and foster meaningful, authentic, vulnerable connection within the self and between human beings. The document you are currently reading is a part of me that I’ve published onto the Internet as a part of that movement.

I am lucky enough to have been a part of several metaverse-related projects throughout my professional career. These projects have helped define significant pieces of who I am:

Project Immersion

My 2015 Northeastern University capstone project, “Project: Immersion,” was an end-to-end solution for capturing wide-angle, 3D video with high-quality audio and displaying it in virtual reality (the Oculus Rift DK2). This project existed long before products like the GoPro Max or the Insta360 One were on the market.

High Fidelity

For five years and across four different roles, I worked at High Fidelity.

HiFi, led by Second Life co-founder Philip Rosedale, was an ambitious company that worked at an incredible, unsustainable speed to bring as many metaverse technologies as possible together under a single software ecosystem. It raised $70 million across several rounds of VC funding.

The technologies integrated into the open-source High Fidelity platform included:

- Industry-best spatial audio technology with ambisonic and near-field support

  - The spatial audio tech HiFi developed has yet to be beaten by any other company. They’re still selling this technology via a JavaScript and Swift API. I wrote the code for the TypeScript and Swift client libraries for this API.

- Real-time, editable 3D worlds containing scripted content

- Virtual environments which could simultaneously host over 350 articulating avatars

- Support for the latest VR headsets and hand-tracking controllers, including Oculus Rift, HTC Vive, Windows MR headsets, Razer Hydra (remember those?), and experimental support for Oculus Quest 1

- An NFT marketplace, virtual currency (HFC), content protection, and a virtual shopping experience built off of the earliest version of the EOS blockchain (in February of 2017!)

- Thoughtful user privacy and safety features

- High-detail, scripted 3D avatars with support for arms and legs and full IK

- Support for Doob 3D-scanned photorealistic avatars

Here are some other features my team or I worked on at HiFi:

- Speech-to-text-to-speech universal translator

- A world where users could talk to GPT-3 and GPT-3 would respond via an Amazon Polly voice

- Text-to-Speech app with dozens of voices and languages

- “Spectator Cam” and “Tablet Cam Pro” apps for streaming and capturing in-world activities

- “Appreciate” app for expressing thanks to other users in-world with an “Appreciation Dodecahedron”

- Throwable 360 camera

- In-world emoji reactions

- Multi-user pixel grid (like Reddit’s /r/place)

Here are some flat and 360 photos that I captured from within High Fidelity:

Each employee had a custom avatar created using Wolf3D tech. Wolf3D built Ready Player Me.

Above: A 360 photo captured 2019-03-16 at one of HiFi’s many “Bingo Extremeo” events, where hundreds of avatars competed for HFC and real-world prizes.

You can interact with the embedded 360 photo with your mouse or finger.

Above: A 360 photo captured 2018-09-07 at a “Road to One Billion” load test event. At one point, 356 avatars were present in this space.

Above: A 360 photo captured 2019-03-16 at the “MultiCon” virtual event, which hosted trivia, an avatar contest, and a Q&A session with the MythBusters.

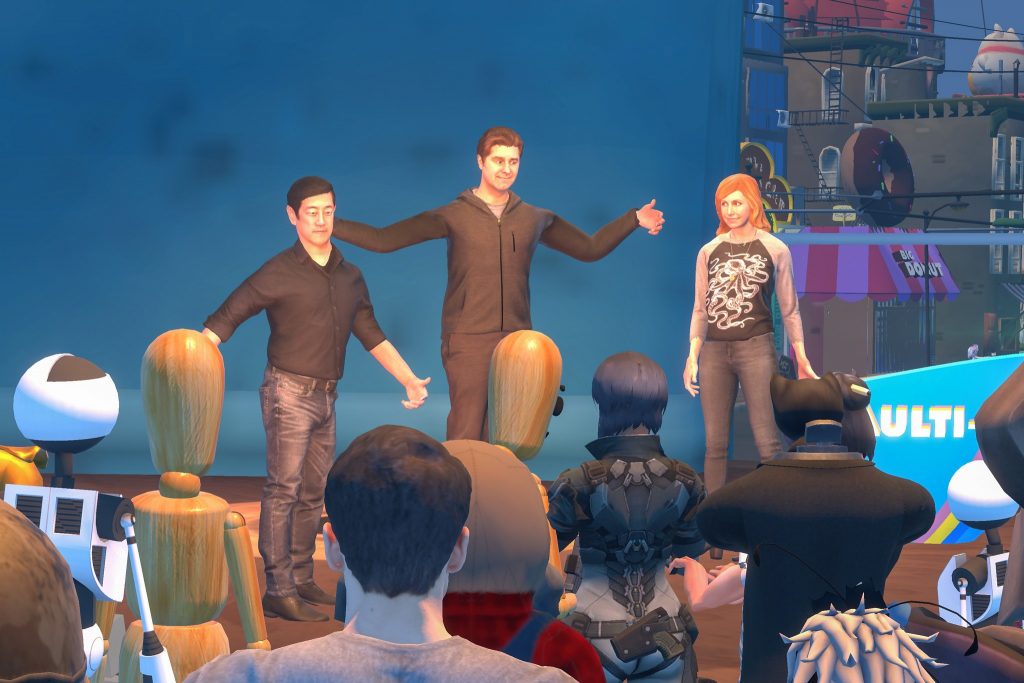

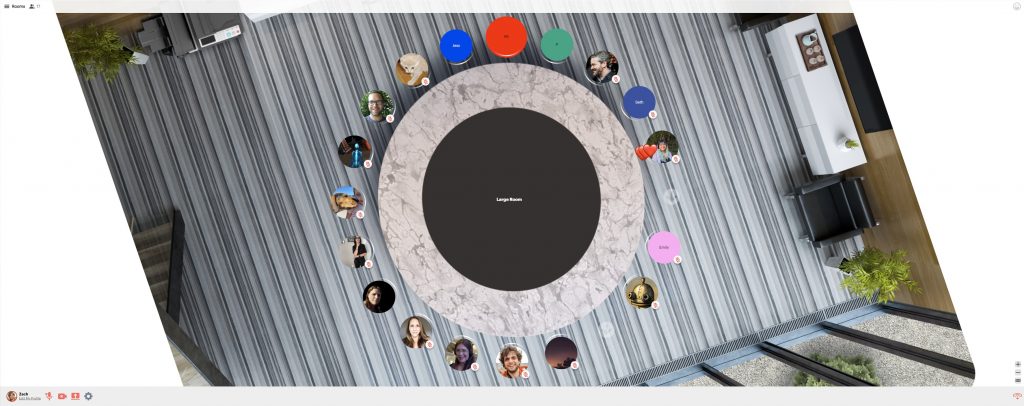

In 2020, High Fidelity completely switched gears and built a web-based, 2D metaverse application. In this app, hundreds of 2D avatars could congregate on a huge, beautiful canvas and communicate with the same amazing spatial audio technology used in the original HiFi platform.

The last iteration of this application was called “Spatial Standup,” and it integrated with Discord, Slack, and Microsoft Teams. I wrote all of the code for Spatial Standup.

Upon reflection, I am still blown away by the number of thoughtful features packed into both High Fidelity platforms, features that platforms today are trying to implement. Some of those platforms are making the same mistakes that HiFi did while implementing those features. There is so much to learn from High Fidelity.

Most importantly to me, I met my wife at High Fidelity. Liv is now a senior product leader at Mozilla, where she leads the Mozilla Hubs team. She is also a Product Advisor at Kai XR, a company which brings metaverse technologies to children in the classroom. Liv and I comprise a metaverse family. We’re huge nerds! 😎

Zach Fox Photography

Between September 2021 and November 2022, I focused on my photography business, Zach Fox Photography. I learned about myself in ways I couldn’t have otherwise.

I learned how to express myself more completely using Web technologies. My website contains projects and portfolios that are extensions of who I am and are only possible for me to present via code, like this photo essay of the time I was rescued from a mountain in Hawaii via helicopter, or this “Interactive Megazoom” of the Brooklyn Bridge.

Croquet

In late November 2022, I joined Croquet as a Developer Relations Engineer, where I am helping spread information about core metaverse technologies. Croquet has developed some foundational metaverse software, including Croquet OS (“The Operating System for the Metaverse”), which lets developers quickly add extremely robust multi-user features to their Web applications without having to write complicated netcode.

In addition to Croquet OS, we recently launched Web Showcase, a product which lets website owners easily integrate a multi-user, customizable virtual environment into their existing website. This is a critical step toward the goal of introducing metaverse technologies to more people.

I’m excited to be a DevRel Engineer at Croquet so that I can help people write code which helps people meaningfully connect to each other. I’m also excited to have a platform on which the metaverse can get to know me.

The Definition of “Metaverse”

Now, you have some idea of the apps and experiences that comprise the metaverse. We’ve also discussed some concepts adjacent to the metaverse, such as identity, interoperability, and Web3. So, can we formally define “metaverse”? I’ll give it a shot:

The metaverse is a social medium spanning space and time where humans create, share, and interact with content while exchanging attention, goods, and services.

Unfortunately, I think we need a different word that isn’t “metaverse.” Giant corporations are taking ownership of this word:

- Meta, formerly known as Facebook, is attempting to own the prefix “meta”

- Epic, with its recent launch of the Verse programming language, is attempting to own the suffix “verse”

It’s not OK that the first thing many people think of when they hear “metaverse” is a multibillion-dollar company whose main business model is selling advertisements.

We already have a great word to describe the ecosystem in which all of these apps and concepts live:

The Internet.

I think many people might reject that word’s scope as too broad. What do you think? Can we use “Internet” to replace the word “metaverse”?

How are you engaging with the metaverse? How do you wish the metaverse better suited your needs? Leave me a comment below or contact me:

zach dot fox at croquet dot io

Love,

Zach